
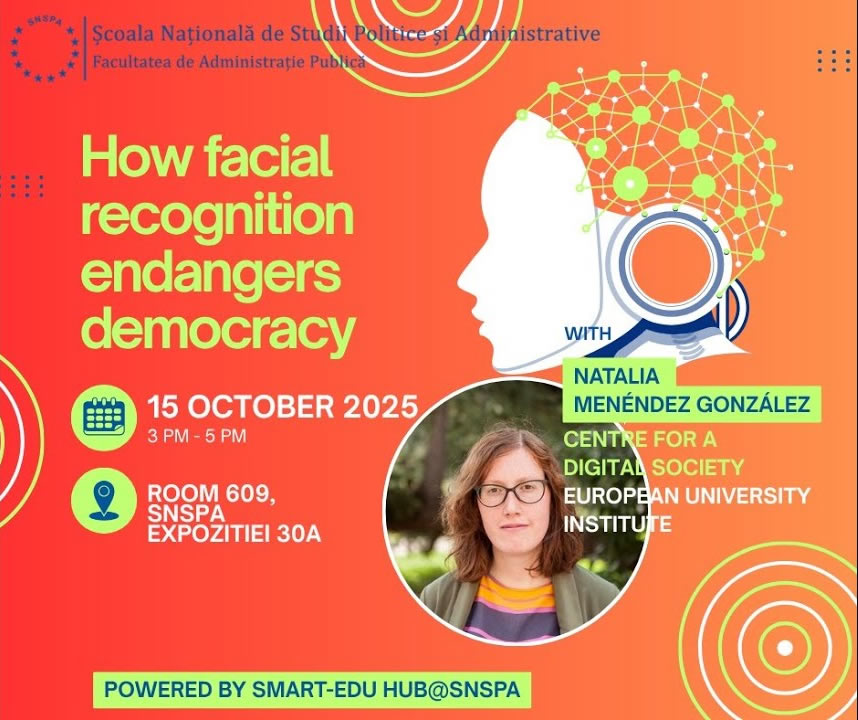

Natalia Menéndez González, Research Associate at the Centre for a Digital Society at the European University Institute, Florence, Italy

How facial recognition endangers democracy

15 October 2025


The workshop, introduced by Professor Cătălin Vrabie, focused on the democratic implications of facial recognition technology and featured Natalia Menéndez, a legal scholar from the European University Institute. The discussion began with a technical clarification of what facial recognition entails: a probabilistic biometric system that uses facial images for identification, verification, or categorization. The speaker distinguished traditional biometric methods from AI-enhanced facial recognition, emphasizing that artificial intelligence has increased accuracy, scalability, and opacity (the “black box” effect) while also enabling controversial applications such as emotion detection, lie detection, and even purported inference of sexual orientation or criminal propensity. These latter uses were presented as scientifically disputed and ethically troubling, and were often compared to discredited practices such as physiognomy.


The core of the lecture examined how facial recognition can endanger democratic principles. Key risks include mass biometric surveillance, the erosion of privacy, deterrence of free expression and assembly, and algorithmic discrimination, particularly against racial minorities and vulnerable groups. Several real-world examples were discussed: the use of facial recognition to enforce hijab laws in Iran, to monitor quarantine compliance in Russia (later repurposed for surveillance), to identify individuals in wartime or protest contexts, and to support voting systems in certain countries. Cases of wrongful arrests caused by automation bias and racially biased training datasets illustrated the tangible harms of overreliance on the technology.

The discussion concluded with a nuanced position: while facial recognition poses serious threats to dignity, fundamental rights, and democratic governance, its impact ultimately depends on governance, proportionality, and design choices. The speaker advocated for responsible regulation, technical safeguards (such as synthetic data to reduce bias), and a clear distinction between analytical support tools and autonomous decision-making systems.


YouTube recording here.
